Crawl Errors: Google Helps You Clean House

Google Search Console provides a wealth of information regarding the visibility of a site in Google. Google has been continuously updating Search Console to offer more ways of monitoring crawl errors and other issues that impact how your domain is indexed. Google’s Core Web Vitals, Page Experience, and other tools help identify possible issues and inform strategies to improve site performance.

How To Index Your Site on Google Search Console

Google Search Console allows you to submit an XML sitemap to help Google discover your pages. We’ve got a great piece on finding, organizing, and updating your XML sitemap if you need a quick refresher. Submitting a sitemap is a relatively quick process and certainly worth the time invested. The sitemap provides a literal map for Google’s crawler, Googlebot, to follow. These crawlers (affectionately known as spiders) are constantly scanning billions of web pages to understand the content of each individual URL. Making your domain easier to crawl helps spiders find and analyze your pages faster, though a sitemap doesn’t guarantee that every page will be indexed.
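If your CMS doesn’t generate a sitemap for you, the file itself is simple. Here’s a minimal Python sketch that builds one in the sitemaps.org format. The `build_sitemap` function and the example.com URLs are hypothetical placeholders. A production sitemap often adds optional fields like `<lastmod>`.

```python
import xml.etree.ElementTree as ET

# Namespace required by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap string with one <url><loc> entry per absolute URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page_url in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page_url  # ElementTree escapes &, <, > for us
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


print(build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/services/",
]))
```

Save the output as `sitemap.xml` at your site root, then submit its URL in Search Console’s Sitemaps report.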

Why Is Google Not Indexing All My Pages?

A site with broken links not only hinders the user experience but can also prevent search engines from finding important site content. To crawl a site thoroughly, Google needs to be able to find all your webpages without getting hung up on technical errors.

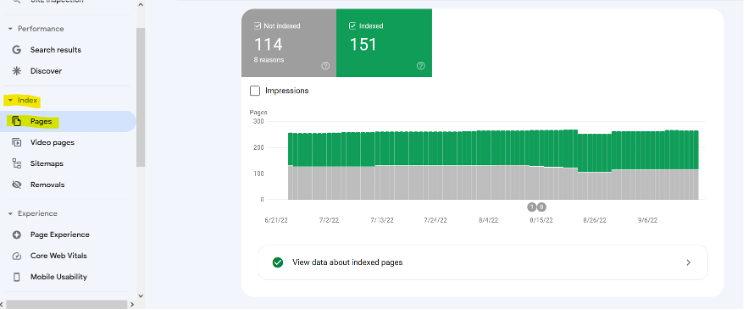

The Page indexing report in Google Search Console provides the information needed to find these broken links and correct them. You’ll find it in the sidebar under Indexing > Pages. It shows you how many pages are indexed and not indexed:

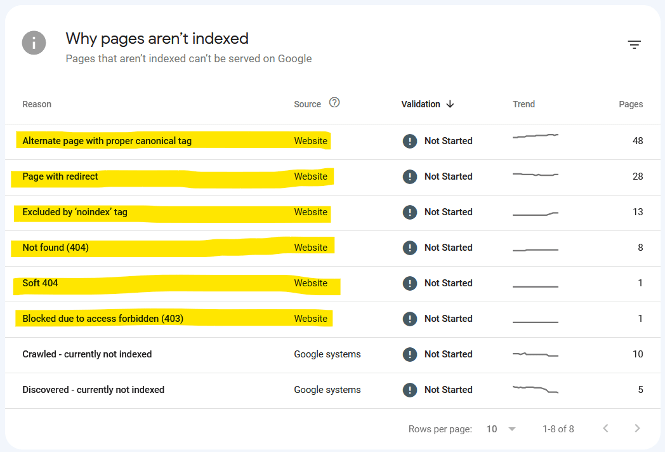

Scrolling down allows you to see a list of reasons pages weren’t indexed. Reasons with a source listed as “Website” are problems you and your team can tackle to get the site crawled as it should be.

Some, like “Excluded by ‘noindex’ tag” and “Alternate page with proper canonical tag,” were likely excluded on purpose to keep duplicate or low-value pages out of search results. Still, they’re worth checking over to make sure the correct pages are being displayed.
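You can spot-check whether an excluded page really carries the directive you expect. Look for a robots meta tag in the page or an `X-Robots-Tag` header. This Python sketch assumes you’ve already fetched the page’s HTML and header value, if any. `RobotsMetaParser` and `has_noindex` are hypothetical names. The sketch doesn’t handle crawler-specific header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, but not attribute values.
        if tag != "meta":
            return
        attr_map = dict(attrs)
        name = (attr_map.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attr_map.get("content") or ""
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html, x_robots_tag=None):
    """Return True if the HTML or an X-Robots-Tag header value says noindex (or none)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    directives = list(parser.directives)
    if x_robots_tag:
        directives.extend(d.strip().lower() for d in x_robots_tag.split(","))
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in directives or "none" in directives
```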

A Quick Note: Not Every Page Should Be Indexed

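It’s best practice to keep most cart or checkout pages, admin access URLs, and customer or account log-in pages out of search results. A robots.txt file blocks crawlers from fetching these pages. Most CMS platforms like WordPress and Shopify will automatically add some of them to your robots.txt file, and you can add additional pages on your own. Keep in mind that robots.txt controls crawling, not indexing: a blocked URL can still appear in Google if other pages link to it. To reliably keep a page out of the index, use a “noindex” tag and leave the page crawlable so Google can see the tag.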
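You can test how a robots.txt file treats specific URLs with Python’s built-in `urllib.robotparser`. The rules below are a hypothetical example, not any platform’s actual file, and `crawl_allowed` is an illustrative helper. This parser doesn’t support Google’s `*` and `$` path wildcards. It also only tells you whether crawling is allowed, not whether a page will be indexed.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules blocking cart, checkout, account, and admin paths.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart
Disallow: /checkout
Disallow: /account
Disallow: /admin
"""


def crawl_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if robots_txt lets user_agent fetch url (crawling only, not indexing)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for url in ("https://www.example.com/cart",
            "https://www.example.com/checkout/step-1",
            "https://www.example.com/products/blue-widget"):
    print(url, "->", "allowed" if crawl_allowed(ROBOTS_TXT, url) else "blocked")
```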

Next Steps for Fixing Un-crawled or Non-indexed Pages

The status codes of these non-indexed pages provide a to-do list for improving site performance. These types of fixes are typically included as part of a technical site audit (TSA). A TSA uses Google Search Console and other tools to surface error codes like:

404 Errors – A 404 Not Found page means both the site and potential visitors miss out on the value of the links pointing to it. Fix these broken links by setting up a 301 (permanent) redirect that points each one to a functioning, relevant page. This reestablishes the relevance those links were originally meant to provide.

Creating 301 redirects also solves other problems. After a site goes through a redesign and URL rewrite, it’s likely to have links from other websites pointing to old URLs. With a 301 redirect in place, visitors who follow those external links to old pages land on a page you’ve chosen rather than a 404 page. You don’t have to contact the other site to update the link.

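403 Errors – Check over redirected links and “access forbidden” 403 errors to make sure you really don’t want those pages crawled. If you don’t, disallow them in robots.txt so crawlers stop spending crawl budget on them. A “noindex” tag won’t save crawl budget, because Google has to crawl a page to see the tag. If you do want them crawled, fix the access settings so Googlebot can reach them.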
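Most sites set up 301 redirects for those 404s in their web server configuration or a CMS plugin. The mechanics are the same everywhere: the server answers an old URL with a `301 Moved Permanently` status and a `Location` header naming the new page. Here’s a minimal sketch of that as a Python WSGI app. The `REDIRECTS` map and its paths are hypothetical. In a real deployment, unmatched paths would fall through to the site’s normal pages rather than returning 404.

```python
# Hypothetical map of old paths (from before a redesign) to their replacements.
REDIRECTS = {
    "/old-services.html": "/services/",
    "/blog/2019/seo-tips": "/blog/seo-tips/",
}


def redirect_app(environ, start_response):
    """Minimal WSGI app: 301 to the new page for mapped old paths, 404 for anything else."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # "Permanent" tells search engines to transfer the old URL's signals to the target.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("404 Not Found", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Not Found"]
```

To try it locally, you could serve it with `wsgiref.simple_server.make_server("", 8000, redirect_app).serve_forever()` and request one of the old paths.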

Correcting a website’s broken links, both inbound and internal, will help improve the user experience. When visitors enter a site and are immediately presented with a 404 page, it doesn’t make for a good first impression. This goes for internal navigation as well. Users met with 404 or 403 pages are likely to leave and lose trust in your brand.
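Crawler tools and audit platforms do this at scale, but the core idea of an internal broken-link check is simple. Collect the links on a page, keep the ones on your own domain, and request each one to see its status code. This standard-library Python sketch checks a single page rather than crawling the whole site, and all of its function names are hypothetical. Some servers reject `HEAD` requests, so a real checker might retry those with `GET`.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urldefrag, urljoin, urlsplit
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, page_url):
    """Return sorted absolute URLs on page_url's own host, with #fragments removed."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlsplit(page_url).netloc
    links = set()
    for href in collector.hrefs:
        absolute = urldefrag(urljoin(page_url, href)).url
        parts = urlsplit(absolute)
        if parts.scheme in ("http", "https") and parts.netloc == host:
            links.add(absolute)
    return sorted(links)


def status_of(url, timeout=10):
    """Return the HTTP status of a HEAD request, or None on network errors/timeouts.
    urlopen follows redirects, so a redirected link reports its final page's status."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:  # 4xx/5xx responses are raised as HTTPError
        return err.code
    except OSError:  # URLError, timeouts, and connection failures
        return None


def broken_internal_links(html, page_url):
    """List (url, status) pairs for internal links that return 403 or 404."""
    broken = []
    for url in internal_links(html, page_url):
        status = status_of(url)
        if status in (403, 404):
            broken.append((url, status))
    return broken
```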


Can I Check If a URL Is Indexed in Google?

Yep! You can spot-check specific pages in Google Search Console to make sure they’re being indexed. Simply copy the page URL and paste it into the URL Inspection search bar at the top of GSC. This gives you a snapshot of how Google sees and indexes that specific page. For sites with thousands (or tens of thousands) of URLs, it’s a quick way to check individual pages without scrolling through the full list of URLs.
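For larger batches, the Search Console API includes a URL Inspection method (`index:inspect`) that returns the same index status the tool shows. This is a rough sketch. It assumes you already have an OAuth 2.0 access token with Search Console access for the property. `build_inspection_request` and `summarize` are hypothetical helpers, and the field names reflect our reading of the API reference. `site_url` must match your property exactly, e.g. `https://www.example.com/`, or `sc-domain:example.com` for a domain property. Google also applies usage quotas to these requests.

```python
import json
import urllib.request

# Search Console API URL Inspection endpoint.
INSPECT_ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"


def build_inspection_request(page_url, site_url, access_token):
    """Build (but don't send) a POST request asking about one URL's index status.
    access_token is assumed to be an OAuth 2.0 token obtained separately."""
    body = json.dumps({"inspectionUrl": page_url, "siteUrl": site_url}).encode("utf-8")
    return urllib.request.Request(
        INSPECT_ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def summarize(response):
    """Pull the verdict and coverage state out of a parsed JSON response."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return status.get("verdict", "UNKNOWN"), status.get("coverageState", "")
```

Sending a request would look like `with urllib.request.urlopen(req) as resp: print(summarize(json.load(resp)))`, repeated for each URL on your list.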

Okay, I’ve Fixed My Links. Now How Often Does Google Crawl?

Google doesn’t publish a fixed crawl schedule. A particularly active site may be crawled as often as every four days, while a site that isn’t updated very often may go as long as 30 days between crawls. That’s another reason to create and publish quality content that provides value to your audience and sends freshness signals to Google. Posting interesting blogs or updating product or service pages tells Google your site is actively maintained!

How To Make Google Crawl Your Site

If you want to make sure Google sees your fixes sooner rather than later, use the Request Indexing option in the URL Inspection tool. You’ll need to be an owner or full user of the property to do this. Remember, requesting a crawl doesn’t immediately send an army of crawlers to your domain. Think of it more as raising your hand and letting Google know there’s something new for it to look at.

Get The Fix on Google Search Console

If you’re looking to clean house by fixing broken site links, Core Web Vitals, and other site performance issues, Google Search Console’s Page indexing and Core Web Vitals reports are great resources. Let us take it off your to-do list; we’ll perform a technical site audit to find all that info for you and present it wrapped in a neat little bow. Call us at (231) 922-9977 or submit a contact form to learn more.